Software drives growth, customer experience, and competitive advantage, yet a single unnoticed defect can disrupt operations, expose security gaps, and erode trust. As systems grow more complex and release cycles accelerate, relying on last-minute checks or fragmented testing practices only increases business risk. The cost of fixing failures after launch is significantly higher than preventing them during development, but many organizations still treat testing as a technical afterthought rather than a strategic discipline.

This article explains what software testing is, why it matters for businesses, and how its core approaches, types, levels, and life cycle fit together, so you can build reliable, secure, and scalable software with clarity and confidence.

TL;DR

- Software testing ensures product quality and reduces business risk.

- There are two main testing approaches: manual and automated.

- Functional testing validates business logic, while non-functional testing ensures performance, security, and usability.

- Testing levels (unit, integration, system, acceptance) structure quality assurance throughout development.

- The Software Testing Life Cycle (STLC) has seven key phases that provide a structured testing process.

- Shift-left testing, CI/CD integration, automation strategy, and risk-based testing are critical for faster, high-quality releases.

- Whether you need dedicated QA teams, automation expertise, or end-to-end software testing services, Sunbytes supports businesses across Europe, the US, and the UK with scalable and security-first testing solutions.

What Is Software Testing?

Software testing is the structured process of evaluating a software application to ensure it functions as intended, meets defined requirements, and performs reliably under real-world conditions. In simple terms, it verifies that what was built works, and works correctly.

The core objective of software testing is threefold: detect defects early, assure consistent quality, and reduce business risk. It validates business logic, confirms system behavior, and ensures the product aligns with user expectations before release. More importantly, it creates measurable control over quality rather than leaving outcomes to chance.

Software testing is a critical phase within the Software Development Life Cycle (SDLC). If you want to understand how testing aligns with broader development phases, read our guide on What Is Software Development Life Cycle (SDLC).

Why Is Software Testing Important for Businesses?

Software failures are business liabilities. A single production defect can disrupt operations, expose security vulnerabilities, and damage customer trust in minutes.

- Cost of fixing bugs early vs. late: Defects identified during development are significantly cheaper to resolve than those discovered after release. The later a bug is found, the higher the cost—financially and reputationally.

- Risk reduction: Testing minimizes operational, financial, and compliance risks. It ensures systems behave predictably under expected and unexpected conditions.

- Security & compliance: With increasing regulatory and cybersecurity pressure, untested software becomes a liability. Structured testing helps prevent data breaches, compliance violations, and legal exposure.

- Customer trust & user experience: Users expect seamless performance. Slow load times, crashes, or inconsistent functionality directly impact retention and brand credibility.

- Faster time-to-market: Paradoxically, disciplined testing accelerates delivery. Clear validation processes reduce rework, firefighting, and last-minute instability.

- Brand reputation: In competitive markets, reliability becomes a differentiator. Businesses known for stable, secure software earn long-term trust and strategic advantage.

Software testing, therefore, is not an operational expense. It is a governance mechanism that protects growth, reputation, and scalability.

What Are the Main Approaches to Software Testing?

Modern software testing is typically executed through two primary approaches: manual testing and automated testing. Each serves a distinct purpose, and choosing the right approach depends on project scope, complexity, release frequency, and risk tolerance.

Manual Testing

Manual testing involves human testers executing test cases without automation tools. It is particularly valuable when evaluating user experience, exploratory scenarios, and complex workflows that require contextual judgment.

Manual testing is most effective:

- During early product validation (e.g., MVP stage)

- For usability and exploratory testing

- When requirements change frequently

- When automation ROI is not yet justified

Advantages

- Flexible and adaptable

- Effective for discovering unexpected issues

- Strong for UX validation

Limitations

- Time-consuming for repetitive tasks

- Less scalable for large regression cycles

- Higher long-term operational cost

Manual testing remains essential in exploratory and usability-focused scenarios. For a deeper breakdown, explore our guide on Manual Testing: Process, Benefits & Use Cases.

Automated Testing

Automated testing uses scripts and tools to execute predefined test cases repeatedly and consistently. It is particularly powerful in regression testing and high-frequency release environments.

Automation becomes critical when:

- Releases are frequent (Agile/DevOps environments)

- Large regression suites must run repeatedly

- Stability and consistency are priorities

- Long-term scalability is required

Advantages

- Faster execution

- Scalable regression coverage

- Improved consistency

- Strong integration with CI/CD pipelines

Limitations

- Higher initial setup cost

- Requires technical expertise

- Not ideal for all testing types (e.g., exploratory UX)

Automation becomes significantly more effective when integrated into a CI/CD pipeline. Learn more in our guides "Automation Testing" and "What Is CI/CD Pipeline?"

Manual vs Automated Testing

Manual and automated testing are not competing strategies—they are complementary.

A balanced testing framework often combines:

- Manual testing for exploration and usability

- Automated testing for regression and scalability

| Criteria | Manual Testing | Automated Testing |
| --- | --- | --- |
| Speed | Slower | Faster |
| Cost (Short Term) | Lower | Higher |
| Cost (Long Term) | Higher | Lower |
| Scalability | Limited | High |
| Best For | UX & exploratory | Regression & CI/CD |

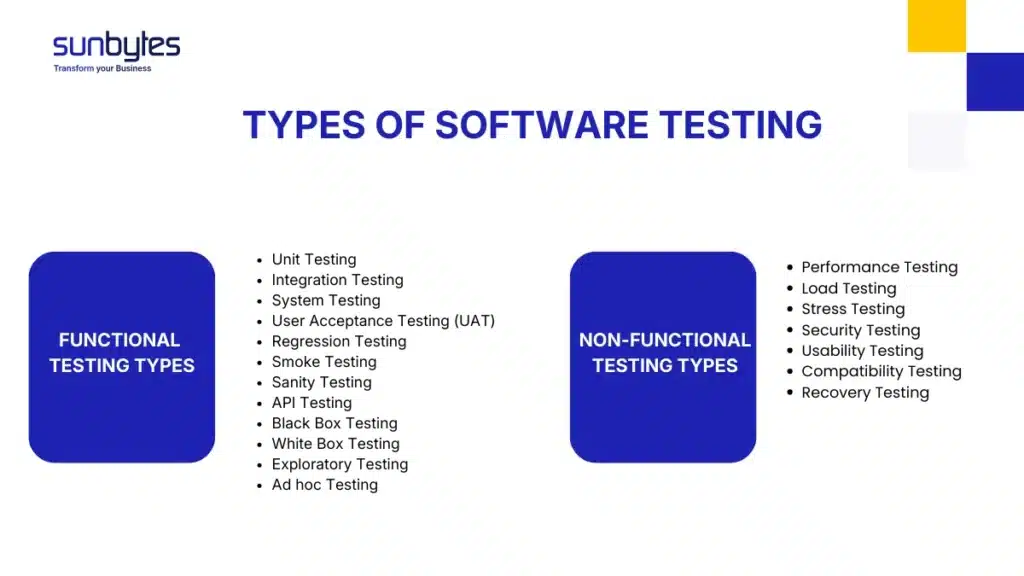
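The short-term versus long-term cost trade-off in the comparison can be made concrete with a break-even calculation. The sketch below is illustrative only: the function name `break_even_runs` and every cost figure are hypothetical, and it assumes a one-off setup cost plus a fixed cost per regression run.

```python
import math
from typing import Optional


def break_even_runs(setup_cost: float,
                    manual_cost_per_run: float,
                    automated_cost_per_run: float) -> Optional[int]:
    """Return the number of regression runs after which automation is
    no more expensive than manual testing, or None if it never pays off."""
    saving_per_run = manual_cost_per_run - automated_cost_per_run
    if saving_per_run <= 0:
        # Each automated run costs at least as much as a manual one.
        return None
    # Smallest whole n with: setup_cost + n * automated <= n * manual
    return math.ceil(setup_cost / saving_per_run)


# Hypothetical figures: 6000 to build the suite, 400 per manual run,
# 50 per automated run (maintenance and infrastructure).
print(break_even_runs(6000, 400, 50))  # -> 18
```

Under these assumed numbers, automation pays for itself from the 18th regression run onward, which is why release frequency weighs so heavily in the decision.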

What Are the Different Types of Software Testing?

Software testing types define what is being validated within a system. They focus on verifying specific aspects of functionality, performance, security, and usability.

It is important to distinguish types from levels of testing.

- Types refer to the purpose of validation (e.g., performance, security, regression).

- Levels refer to when and where testing occurs in the development process (e.g., unit, integration, system).

Broadly, software testing types fall into two main categories: functional testing and non-functional testing. Together, they ensure both business logic correctness and system reliability under real-world conditions.

Functional Testing Types

Functional testing validates that the software behaves according to defined business requirements. It answers the question: Does the system do what it is supposed to do?


- Unit Testing: Verifies individual components or functions in isolation. Example: Testing a calculation method independently before integration.

- Integration Testing: Ensures multiple modules work correctly together. Example: Verifying that payment processing integrates properly with order management.

- System Testing: Validates the complete system as a whole. Example: Testing the entire e-commerce workflow from login to checkout.

- User Acceptance Testing (UAT): Confirms the system meets business and user expectations before release. Example: End users validating real-world scenarios.

- Regression Testing: Ensures new changes do not break existing functionality. Example: Re-testing core features after a feature update.

- Smoke Testing: Quick, high-level validation of critical functionality after a new build. Example: Verifying that the application launches and core modules load.

- Sanity Testing: Focused validation after minor changes or bug fixes. Example: Confirming a resolved defect does not affect related features.

- API Testing: Validates communication between system components or services. Example: Testing API responses for accuracy and performance.

- Black Box Testing: Tests functionality without visibility into internal code structure. Focus: input vs output validation.

- White Box Testing: Tests internal logic and code structure. Focus: paths, conditions, and internal flows.

- Exploratory Testing: Unscripted testing based on tester intuition and experience. Focus: uncovering unexpected issues.

- Ad Hoc Testing: Informal, unstructured testing without predefined cases. Focus: quick defect discovery.

Functional testing ensures that the system fulfills its intended purpose from a business perspective.

Non-Functional Testing Types

Non-functional testing validates how well the system performs rather than what it does. It ensures stability, scalability, security, and user satisfaction under varying conditions.

- Performance Testing: Evaluates system responsiveness, throughput, and stability under a defined workload. Load and stress testing are specific forms of it.

- Load Testing: Measures system behavior at the level of user traffic the system is expected to handle.

- Stress Testing: Pushes the system beyond capacity to identify breaking points.

- Security Testing: Identifies vulnerabilities and protects against unauthorized access or data breaches.

- Usability Testing: Assesses ease of use and user experience.

- Compatibility Testing: Ensures the software functions across devices, browsers, and operating systems.

- Recovery Testing: Validates the system’s ability to recover after crashes or failures.

While functional testing protects business logic, non-functional testing protects user trust and operational stability. Both are essential for delivering reliable software at scale.

What Are the Different Levels of Software Testing?

Testing levels follow a logical progression within the Software Development Life Cycle (SDLC). Each level builds upon the previous one, increasing scope and system complexity. Understanding these levels helps organizations assign responsibility clearly and prevent defect escalation.

At each level, different stakeholders are involved, from developers to QA engineers to business users.

Unit Testing

Unit testing validates the smallest testable components of an application, typically individual functions or methods. It is primarily performed by developers during the coding phase.

Purpose:

- Detect defects early

- Validate internal logic

- Prevent issues from propagating to higher levels

By isolating components, unit testing creates a strong quality foundation and reduces downstream rework.
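As a minimal sketch of a unit test, the example below checks one calculation function in isolation. The function `order_total_cents` and its discount rule are hypothetical, invented for illustration; the tests are plain functions using `assert`, a style that test runners such as pytest can collect.

```python
def order_total_cents(unit_price_cents: int, quantity: int,
                      discount_percent: int = 0) -> int:
    """Return the order total in cents after a whole-percent discount
    (rounded down). Hypothetical business rule for illustration."""
    if quantity < 0:
        raise ValueError("quantity must not be negative")
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    return unit_price_cents * quantity * (100 - discount_percent) // 100


def test_total_without_discount():
    assert order_total_cents(1999, 3) == 5997


def test_total_with_discount():
    assert order_total_cents(1999, 3, discount_percent=10) == 5397


def test_negative_quantity_is_rejected():
    try:
        order_total_cents(1999, -1)
    except ValueError:
        return
    raise AssertionError("expected ValueError for a negative quantity")
```

Working in integer cents keeps the expected values exact, so the tests do not depend on floating-point rounding.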

Integration Testing

Integration testing verifies that multiple modules or services work together as intended. It is usually performed by QA teams or developers once individual units are validated.

Purpose:

- Detect interface defects

- Validate data flow between components

- Ensure correct system interactions

As systems grow more modular and service-based, integration testing becomes increasingly critical.
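The following sketch shows the idea on the payment and order example used earlier: two components are wired together and the test checks the data that flows across their interface. The classes `OrderService` and `FakePaymentGateway` are hypothetical, and the fake gateway stands in for a real payment provider, which a fuller integration test would typically replace with a sandbox environment.

```python
class FakePaymentGateway:
    """Stand-in for a payment provider; records every charge it receives."""

    def __init__(self, approve: bool = True):
        self.approve = approve
        self.charges = []

    def charge(self, order_id: str, amount_cents: int) -> bool:
        self.charges.append((order_id, amount_cents))
        return self.approve


class OrderService:
    """Order management component that depends on a payment gateway."""

    def __init__(self, gateway):
        self.gateway = gateway
        self.status = {}

    def place_order(self, order_id: str, amount_cents: int) -> str:
        paid = self.gateway.charge(order_id, amount_cents)
        self.status[order_id] = "confirmed" if paid else "payment_failed"
        return self.status[order_id]


def test_successful_payment_confirms_order():
    gateway = FakePaymentGateway(approve=True)
    orders = OrderService(gateway)
    assert orders.place_order("A-1", 5997) == "confirmed"
    # The interface contract: exactly one charge with the right data.
    assert gateway.charges == [("A-1", 5997)]


def test_declined_payment_marks_order_failed():
    orders = OrderService(FakePaymentGateway(approve=False))
    assert orders.place_order("A-2", 1000) == "payment_failed"
```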

System Testing

System testing evaluates the complete, integrated application in an environment that closely mirrors production. It is typically conducted by QA teams.

Purpose:

- Validate end-to-end workflows

- Ensure compliance with business requirements

- Test both functional and non-functional aspects

At this level, the system is assessed as a whole rather than as individual parts.

Acceptance Testing

Acceptance testing confirms whether the system is ready for release from a business perspective. It is often performed by end users, stakeholders, or product owners.

Purpose:

- Validate real-world use cases

- Confirm alignment with business goals

- Provide final approval before deployment

Acceptance testing acts as the final quality gate before software reaches customers.

Together, these levels create a structured testing hierarchy that detects defects early, minimizes risk, and maintains quality control throughout development.

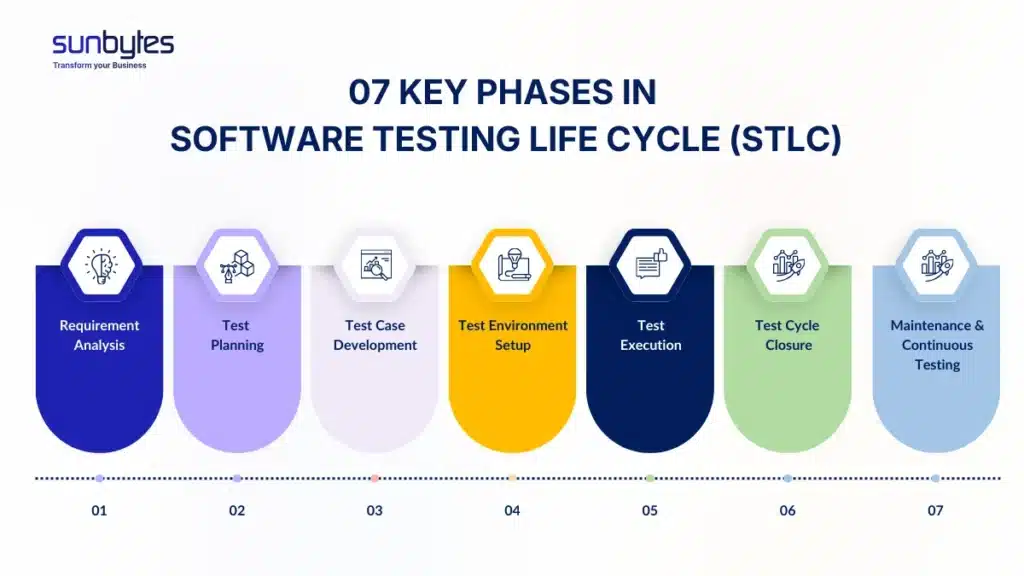

What Are the Key Phases in the Software Testing Life Cycle (STLC)?

The Software Testing Life Cycle (STLC) defines the structured process used to plan, execute, and manage testing activities. While testing levels describe where validation happens, STLC defines how testing is organized and controlled.

It operates alongside the Software Development Life Cycle (SDLC), ensuring that quality assurance aligns with each development phase. A clearly defined STLC introduces predictability, accountability, and measurable quality outcomes.

Below are the seven key phases that form a disciplined testing framework.

Phase 1: Requirement Analysis

Testing begins long before execution. In this phase, teams review functional and non-functional requirements to determine what can and should be tested.

Focus areas:

- Reviewing functional and non-functional requirements

- Identifying testable conditions

- Detecting ambiguities or gaps

- Conducting initial risk identification

Early clarity prevents downstream defects.

Phase 2: Test Planning

This phase defines the strategic blueprint for testing.

Focus areas:

- Creating the test strategy document

- Resource allocation and role definition

- Timeline estimation

- Tool selection

- Test environment planning

A strong test plan establishes governance and sets measurable expectations.

Phase 3: Test Case Development

Test cases translate requirements into executable validation steps.

Focus areas:

- Writing detailed test cases

- Preparing test scenarios

- Creating and managing test data

- Conducting review and approval cycles

Well-designed test cases ensure consistency and traceability.
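Traceability can be checked mechanically once each test case records the requirement it validates. The sketch below assumes a simple in-memory structure; the `CaseRecord` type, the `uncovered_requirements` helper, and the `REQ-` identifiers are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class CaseRecord:
    case_id: str
    requirement_id: str  # the requirement this test case validates
    title: str


def uncovered_requirements(requirement_ids: Iterable[str],
                           cases: Iterable[CaseRecord]) -> List[str]:
    """Return requirement IDs that no test case traces back to."""
    covered = {case.requirement_id for case in cases}
    return sorted(set(requirement_ids) - covered)


requirements = ["REQ-1", "REQ-2", "REQ-3"]
cases = [
    CaseRecord("TC-10", "REQ-1", "Login with valid credentials"),
    CaseRecord("TC-11", "REQ-1", "Login with wrong password"),
    CaseRecord("TC-20", "REQ-2", "Checkout with saved card"),
]
print(uncovered_requirements(requirements, cases))  # -> ['REQ-3']
```

A gap report like this is a natural input to the review and approval cycle, because it shows which requirements still lack any validation step.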

Phase 4: Test Environment Setup

Testing cannot proceed without a stable environment that mirrors production conditions.

Focus areas:

- Infrastructure setup

- Tool configuration

- Access management

- Data preparation

Environment stability directly impacts test reliability.

Phase 5: Test Execution

This is where validation formally occurs.

Focus areas:

- Executing manual and automated test cases

- Recording outcomes

- Logging and tracking defects

- Retesting resolved issues

Execution must be disciplined, documented, and measurable.

Phase 6: Test Cycle Closure

Once testing objectives are met, the cycle is formally closed.

Focus areas:

- Preparing test summary reports

- Analyzing metrics and KPIs

- Evaluating defect trends

- Documenting lessons learned

This phase converts testing data into strategic insights.

Phase 7: Maintenance & Continuous Testing

Quality assurance does not end at release. As software evolves, testing must adapt.

Focus areas:

- Validating updates, patches, and new features

- Maintaining regression coverage

- Ensuring system stability over time

In Agile and DevOps environments, this phase enables continuous quality control.

A structured STLC ensures testing is proactive, controlled, and aligned with business objectives rather than reactive firefighting.

What Are the Most Popular Software Testing Models?

Testing models define how quality assurance is structured within a broader development methodology. While the STLC describes the phases of testing, testing models describe the architectural logic behind how validation aligns with development.

Choosing the right testing model depends on project complexity, regulatory requirements, system architecture, and release frequency. A misaligned model often results in inefficiencies, delayed releases, or quality gaps.

Below are the most widely adopted testing models in modern software development.

V-Model

The V-Model (Verification and Validation Model) aligns each development phase with a corresponding testing phase. Testing activities are planned in parallel with development.

Structured verification & validation: Each requirement has a matching validation stage, ensuring traceability from specification to delivery.

Pros:

- Clear documentation

- Strong traceability

- Predictable structure

- Suitable for regulated industries

Cons:

- Limited flexibility

- Less adaptable to changing requirements

Best suited for:

- Enterprise systems

- Healthcare, finance, and government projects

- Compliance-heavy environments

The V-Model emphasizes control and predictability—key in high-risk domains.

Test Pyramid Model

The Test Pyramid model prioritizes a large base of unit tests, a smaller layer of integration tests, and only a few UI (end-to-end) tests at the top.

- Core principle: Invest heavily in fast, low-level tests to reduce dependency on slower, fragile UI validation.

- Heavy unit testing: Ensures early defect detection and cost efficiency.

- Fewer UI tests: Reduces maintenance overhead and instability.

- Cost efficiency: Lower execution time and faster feedback loops support Agile and DevOps environments.

The Test Pyramid is widely adopted in modern continuous delivery pipelines.

Honeycomb Testing Model

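The Honeycomb model addresses testing in distributed and microservices-based architectures. It concentrates most test effort on integration tests that exercise each service through its interfaces, with fewer tests of internal implementation details and fewer tests that require the whole system to be deployed.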

- Microservices architecture focus: Validates each service independently.

- Distributed systems validation: Ensures reliable service-to-service communication.

- Service-to-service testing: Prioritizes API and integration stability over UI dependency.

As organizations move toward cloud-native systems, the Honeycomb model provides architectural alignment.

Agile & DevOps Testing Approach

Modern software teams increasingly adopt Agile and DevOps methodologies, where testing is continuous rather than sequential.

- Continuous testing: Validation happens throughout development cycles.

- CI/CD integration: Automated tests run with every code change.

- Shift-left strategy: Testing begins at the requirement stage, not after development.

- Automation-first mindset: Scalable automation ensures rapid feedback without sacrificing quality.

This approach prioritizes speed, adaptability, and continuous quality governance, all of which are critical in competitive digital markets.

Selecting the appropriate testing model ensures that quality assurance aligns with both technical architecture and business strategy.

What Are the Common Challenges in Software Testing?

Even with structured frameworks and modern tools, software testing presents operational and strategic challenges. If not managed proactively, these challenges can slow delivery, inflate costs, and weaken quality control.

Understanding these obstacles allows organizations to design more resilient testing strategies.

Flaky Tests

Automated tests that produce inconsistent results undermine trust in the testing process.

When teams cannot rely on test outcomes, they waste time re-running builds and investigating false failures. Over time, this erodes confidence in automation and slows release cycles.
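One common source of flakiness is a hidden dependency on the system clock. The sketch below shows one way to remove it, under the assumption that the code can accept its time source as a parameter; `greeting` and `utc_now` are hypothetical names used only for illustration.

```python
from datetime import datetime, timezone
from typing import Callable


def utc_now() -> datetime:
    return datetime.now(timezone.utc)


def greeting(clock: Callable[[], datetime] = utc_now) -> str:
    """Return a greeting based on the hour reported by `clock`.

    Production code uses the real clock by default; tests pass a fixed
    one, so the result no longer depends on when the test runs."""
    return "Good morning" if clock().hour < 12 else "Good afternoon"


def test_greeting_before_noon():
    fixed = lambda: datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
    assert greeting(fixed) == "Good morning"


def test_greeting_after_noon():
    fixed = lambda: datetime(2024, 1, 1, 15, 0, tzinfo=timezone.utc)
    assert greeting(fixed) == "Good afternoon"
```

A test that called `greeting()` with the real clock and expected "Good morning" would pass or fail depending on the time of day, which is exactly the inconsistent behavior described above.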

Environment Inconsistencies

Testing environments that differ from production introduce unreliable results.

Configuration mismatches, unstable infrastructure, or incomplete data sets can generate misleading outcomes—either hiding real defects or producing false positives.

Stable environments are foundational to reliable testing.
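Configuration mismatches can often be surfaced before a test run by comparing settings directly. This is a minimal sketch that assumes each environment's configuration is available as a flat dictionary; the `config_drift` helper and the sample settings are hypothetical.

```python
MISSING = "<missing>"


def config_drift(reference: dict, candidate: dict) -> dict:
    """Return {key: (reference_value, candidate_value)} for every setting
    that differs between the two environments or exists in only one."""
    drift = {}
    for key in sorted(reference.keys() | candidate.keys()):
        expected = reference.get(key, MISSING)
        actual = candidate.get(key, MISSING)
        if expected != actual:
            drift[key] = (expected, actual)
    return drift


# Hypothetical settings for illustration.
production = {"db_engine": "postgres-15", "cache_enabled": True, "max_upload_mb": 50}
staging = {"db_engine": "postgres-13", "cache_enabled": True}

for key, (prod_value, staging_value) in config_drift(production, staging).items():
    print(f"{key}: production={prod_value!r} staging={staging_value!r}")
```

With the sample data, the report flags the database version difference and the upload limit that staging does not define at all.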

Poor Requirements

Ambiguous or incomplete requirements lead to unclear test cases and validation gaps.

If business logic is not clearly defined, testing becomes reactive rather than structured. This often results in late-stage defect discovery and rework.

Clarity at the requirement stage directly impacts quality downstream.

Tight Deadlines

Compressed timelines frequently push testing to the end of the development cycle.

When testing is rushed or reduced, critical defects escape into production. Short-term speed may lead to long-term instability.

Disciplined planning prevents quality compromises under pressure.

Limited Automation Coverage

Relying heavily on manual regression testing restricts scalability.

As applications grow, manual-only approaches cannot keep pace with release frequency. Without balanced automation, teams face bottlenecks and increased human error.

Communication Gaps Between Development and QA

Siloed teams slow defect resolution and reduce efficiency.

When developers and testers operate independently without shared visibility, feedback loops lengthen. Collaborative processes and shared ownership are essential for sustained quality.

These challenges are not technical failures—they are process gaps. Organizations that proactively address them transform testing from a reactive function into a strategic quality engine.

What Are the Best Practices in Software Testing?

High-performing organizations do not treat testing as a final checkpoint. They embed it into their development strategy to create predictable quality and controlled scalability.

The following best practices strengthen both speed and reliability.

Shift-Left Testing

Testing should begin at the requirement and design stages—not after development is complete.

By validating assumptions early, teams reduce defect leakage and minimize costly rework. Early validation improves clarity, alignment, and long-term stability.

CI/CD Integration

Modern software environments require continuous validation.

Integrating automated tests into CI/CD pipelines ensures every code change is verified immediately. This reduces regression risk and shortens feedback loops, enabling faster and safer releases.
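In practice, a pipeline step usually decides pass or fail from a process exit code. The sketch below shows that gate with Python's standard `unittest` module; the `SmokeTests` class and `run_gate` function are hypothetical, and the single check is a placeholder for a real suite.

```python
import unittest


class SmokeTests(unittest.TestCase):
    """Placeholder suite; a real pipeline would load the project's tests."""

    def test_arithmetic_sanity(self):
        self.assertEqual(2 + 2, 4)


def run_gate() -> int:
    """Run the suite and return a process exit code: 0 on success,
    1 if any test failed or errored."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(SmokeTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return 0 if result.wasSuccessful() else 1
```

A pipeline step could end with `sys.exit(run_gate())`, so that a non-zero code stops the build before a failing change is merged or deployed.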

Test Automation Strategy

Automation should be intentional—not reactive. A balanced strategy defines:

- Which tests to automate

- Which to keep manual

- Tool selection criteria

- Maintenance planning

Effective automation prioritizes stability, speed, and scalability rather than automation volume alone.

Risk-Based Testing

Not all features carry equal business risk.

Prioritizing high-impact and high-risk areas ensures resources are allocated strategically. This approach strengthens protection where it matters most—security, financial transactions, and core workflows.
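A simple way to make this prioritization explicit is to score each feature as likelihood of failure multiplied by business impact. The sketch below assumes 1 to 5 scales for both factors; the `Feature` type, the scales, and the sample scores are hypothetical, and real scoring schemes vary by organization.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Feature:
    name: str
    likelihood: int  # 1 (rarely fails) to 5 (fails often), assumed scale
    impact: int      # 1 (cosmetic) to 5 (business-critical), assumed scale

    @property
    def risk_score(self) -> int:
        return self.likelihood * self.impact


def prioritize(features: Iterable[Feature]) -> List[Feature]:
    """Order features so the highest-risk areas are tested first."""
    return sorted(features, key=lambda f: f.risk_score, reverse=True)


backlog = [
    Feature("profile avatar upload", likelihood=2, impact=1),
    Feature("checkout payment", likelihood=4, impact=5),
    Feature("login", likelihood=3, impact=5),
]
print([f.name for f in prioritize(backlog)])
# -> ['checkout payment', 'login', 'profile avatar upload']
```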

Test Data Management

Reliable testing depends on accurate and controlled data.

Well-managed test data ensures realistic validation while maintaining compliance and data protection standards.
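One technique for keeping test data realistic without exposing personal details is deterministic pseudonymization. The sketch below is an illustration under stated assumptions, not a compliance recipe: `pseudonymize_email` and the salt value are hypothetical, and whether salted hashing satisfies a given data protection requirement has to be assessed separately.

```python
import hashlib


def pseudonymize_email(email: str, salt: str) -> str:
    """Replace an email address with a stable, non-identifying stand-in.

    The same input always maps to the same output, so relationships
    between records survive, while the original address is not stored."""
    digest = hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()
    # example.test uses a reserved top-level domain, so no mail is delivered.
    return f"user_{digest[:12]}@example.test"


masked = pseudonymize_email("Jane.Doe@Customer.com", salt="demo-salt")
print(masked.endswith("@example.test"))  # -> True
```

Because the mapping is deterministic, the same customer receives the same stand-in address across tables, which keeps joins and duplicate checks in test scenarios meaningful.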

Continuous Testing

In Agile and DevOps environments, testing is ongoing.

Continuous testing enables rapid iteration without sacrificing quality control. It supports evolving products and frequent releases.

Security-First Testing

Security validation must be integrated into testing workflows—not treated as a separate function.

Proactive vulnerability detection reduces exposure and protects both users and business operations.
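Security checks can sit alongside ordinary functional tests. As a minimal sketch, the test below feeds a classic SQL injection payload to a lookup function and asserts that it matches nothing. It uses Python's built-in `sqlite3` module with an in-memory database; the `users` table and the `find_user` and `make_db` helpers are hypothetical.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT NOT NULL)"
    )
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn


def find_user(conn: sqlite3.Connection, username: str):
    # Parameterized query: the input is bound as data, never spliced
    # into the SQL text.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchone()


def test_injection_payload_matches_nothing():
    conn = make_db()
    assert find_user(conn, "' OR '1'='1") is None


def test_regular_lookup_still_works():
    conn = make_db()
    assert find_user(conn, "alice") == (1, "alice")
```

If `find_user` built its query by string concatenation instead, the first test would fail because the payload would match every row, which is the kind of regression this check is meant to catch.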

Metrics & KPIs

Quality must be measurable. Tracking defect density, test coverage, pass rates, and cycle time provides visibility into performance and improvement opportunities. Data-driven insights transform testing from operational activity into strategic oversight.

Organizations that adopt these practices build structured, scalable quality systems capable of supporting growth and innovation.
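Two of the metrics named under Metrics & KPIs have simple, widely used definitions: defect density as defects per thousand lines of code, and pass rate as passed tests divided by executed tests. The sketch below uses those definitions; the function names and the sample figures are hypothetical.

```python
def defect_density(defects: int, lines_of_code: int) -> float:
    """Defects per thousand lines of code (KLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defects / (lines_of_code / 1000)


def pass_rate(passed: int, executed: int) -> float:
    """Share of executed tests that passed, as a fraction from 0 to 1."""
    if executed <= 0:
        raise ValueError("executed must be positive")
    return passed / executed


# Hypothetical release figures for illustration.
print(defect_density(defects=12, lines_of_code=48_000))  # -> 0.25
print(pass_rate(passed=190, executed=200))               # -> 0.95
```

Tracked per release, values like these show trends rather than absolutes, which is usually where the insight lies.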

Why Choose Sunbytes for Software Testing Services?

Software testing requires more than executing test cases. It demands structure, domain expertise, and alignment with business objectives. At Sunbytes, we provide dedicated QA teams and end-to-end software testing services designed to ensure reliability, scalability, and security across the entire development lifecycle. From functional validation to performance and security testing, we help organizations implement disciplined quality control frameworks that reduce risk and support continuous delivery.

Why Sunbytes

Sunbytes is a Dutch technology company headquartered in the Netherlands, supported by a delivery hub in Vietnam. For over 14 years, we have partnered with organizations worldwide to strengthen digital execution—combining structured engineering practices with security-driven delivery.

- Digital Transformation Solutions: We design, build, and modernize digital products through senior engineering teams. From custom software development to QA, testing, and ongoing maintenance, we help businesses translate strategy into stable, high-performing systems.

- Cybersecurity Solutions: Quality without security creates exposure. Our security-focused services reduce operational risk while maintaining delivery speed—ensuring compliance readiness and proactive vulnerability management throughout the software lifecycle.

- Accelerate Workforce Solutions: Growth demands flexibility. We support scaling teams through recruitment and workforce solutions, enabling organizations to expand capabilities without compromising quality standards.

If you are evaluating how to strengthen your software quality strategy, speak with our experts to explore a testing approach aligned with your business goals.

FAQs

What is the difference between manual and automated testing?

Manual testing relies on human execution and is ideal for exploratory, usability, and early-stage validation. Automated testing uses scripts and tools to execute repetitive tests efficiently, making it essential for regression and CI/CD environments. Most modern teams adopt a hybrid approach: manual for insight, automation for scalability.

What is the difference between software testing and Quality Assurance (QA)?

Software testing focuses on identifying defects in a product. Quality Assurance (QA) is broader: it defines the processes, standards, and methodologies that prevent defects from occurring in the first place. Testing is a component of QA, while QA governs the overall quality strategy.

When should software testing begin?

Software testing should begin during the requirement and design phases, a practice known as shift-left testing. Early involvement helps identify ambiguities, reduce rework, and lower defect resolution costs. Waiting until development is complete increases risk and delays delivery.

Do startups need software testing?

Startups benefit significantly from early testing because resources are limited and reputational damage can be costly. Structured testing improves MVP stability, enhances investor confidence, and prevents expensive rebuilds after launch.

What is the most important type of software testing?

There is no single “most important” testing type; it depends on the system and business risk. However, functional testing ensures core features work correctly, while security and performance testing protect user trust and operational stability. A balanced strategy aligned with business priorities is essential.
